The next iPhone system update will include new features designed to assist folks who have difficulty speaking — by replicating their own voices with artificial intelligence.

Personal Voice, which replicates voices, and Live Speech, which allows people to type words that can be spoken out loud, will be part of a suite of new accessibility features arriving with the operating system iOS 17 this fall.

“Accessibility is part of everything we do at Apple,” said Sarah Herrlinger, Apple’s senior director of Global Accessibility Policy and Initiatives, in an Apple blog post. “These groundbreaking features were designed with feedback from members of disability communities every step of the way, to support a diverse set of users and help people connect in new ways.”

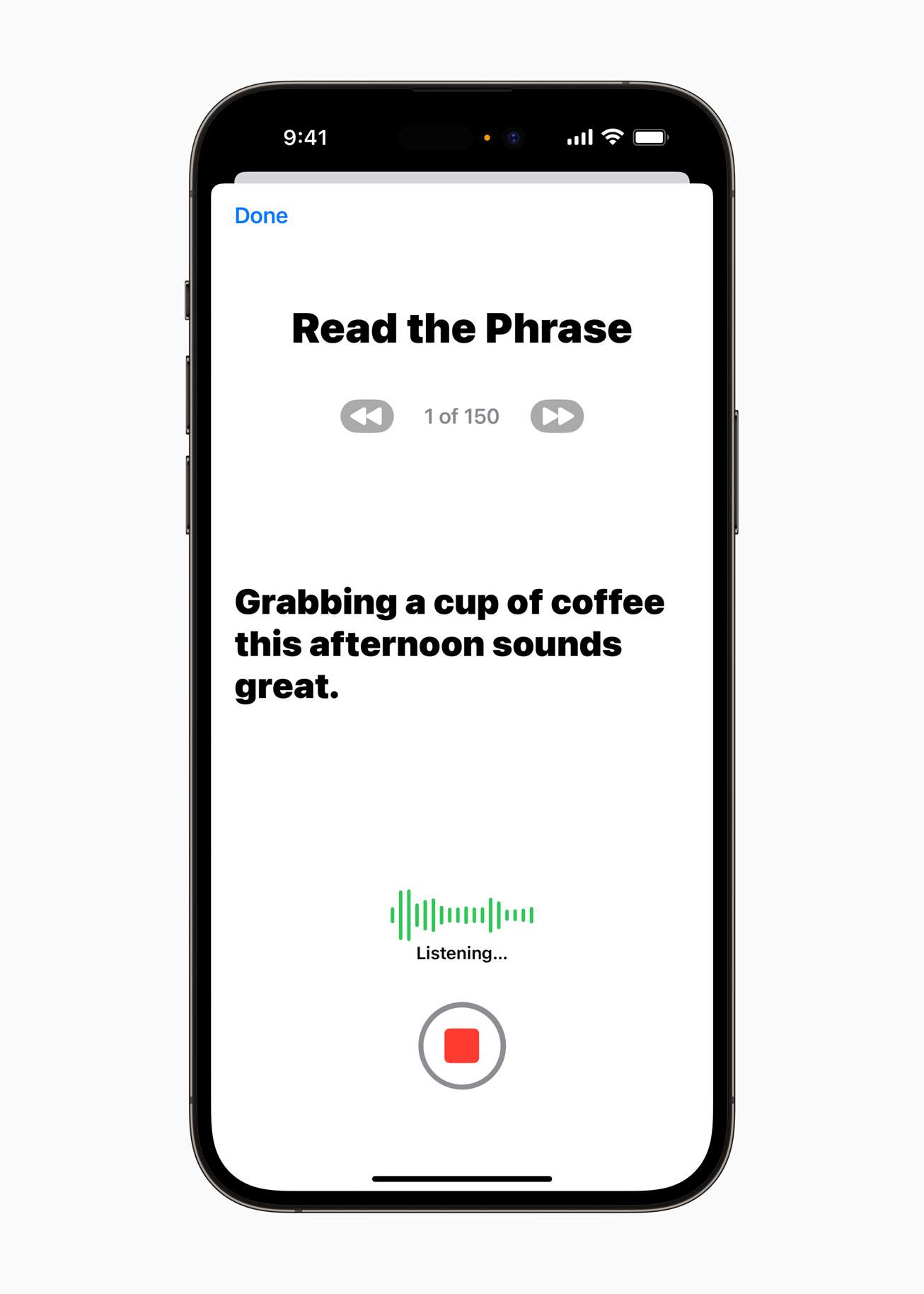

The Personal Voice feature requires users to record a 15-minute audio sample by reading aloud a randomized series of statements. The device then uses that speech data to create a simulation of the user’s voice.

It works seamlessly with Live Speech, so people can use the voice re-creation to speak on phone and video calls by typing whatever they want to say. They can also use it for in-person communication.

The idea is that folks who already have difficulty speaking, or who are at risk of losing the ability to speak, will now be able to communicate in their own voice instead of Siri or another generic computer voice.

Of course, the “AI” shorthand conjures up some unsettling ideas — what if someone accesses and uses your simulated voice for nefarious purposes?

Apple insists that guardrails are in place. For instance, voice simulations are created entirely on the device itself, without using the internet. And Apple won’t have access to the voice data, either, since it remains unlinked from your Apple ID unless you opt in.

Another feature in the new accessibility suite, dubbed Assistive Access, offers more customization in popular apps, allowing people with a variety of cognitive and physical disabilities to create an easier, more helpful user experience.

An updated Magnifier app will also help blind and low-vision users make out everyday items. But Personal Voice and Live Speech are drawing the most attention, especially among folks who need help speaking.

“If you can tell [friends and family] you love them, in a voice that sounds like you, it makes all the difference in the world — and being able to create your synthetic voice on your iPhone in just 15 minutes is extraordinary,” said Lou Gehrig’s disease (ALS) advocate Philip Green in the Apple post.

This story originally appeared on Simplemost. Check out Simplemost for additional stories.